Cutting Through the Noise: 2 generative AI threats to prepare for

Discover the two generative AI threats that fraud prevention teams need to pay attention to now, and how location intelligence can help you prepare for them.

Excitement and fear about generative AI are sky-high right now.

In particular, there’s a huge amount of buzz about its potential for fraudulent uses. Warnings and opinions are flying left and right.

Cybersecurity thought leaders have sounded the alarm about a number of potential future threats related to AI, like:

- Generative AI being used to write malware from scratch

- Chatbots staging social engineering attacks against everyday consumers

- The use of prompt injection to convince AI to divulge confidential information

But generative AI is not just a future threat: It’s also a current one. And given the amount of noise out there about this topic right now, I imagine fraud prevention, account security, and Trust & Safety professionals may be confused about which AI-related threats they should be preparing for first.

There are two specific generative AI threats that your team should actually be getting ready for now:

Chatbots and deepfakes.

Generative AI chatbots & the threat they pose to fraud fighters

Generative AI chatbots are sophisticated AI programs that simulate conversation with a human user. The person communicating with the chatbot types their prompt or query, and the chatbot sends back a text response like a human would.

These chatbots can also be asked to do things like completing tasks or emulating certain personalities or characteristics in their responses.

In the hands of a well-meaning person, chatbots might be used to do things like generating ideas or kickstarting a brainstorming session. But in the hands of bad actors, a chatbot becomes a tool for scaling fraud and amplifying attack capabilities.

Take phishing as an example. After efforts to build awareness in recent years, many of us now know that we shouldn’t open suspicious emails, click on unknown links or give out our credentials over unofficial channels. But generations that are less digitally savvy still fall victim to scams.

Phishing is a numbers game for fraudsters. The more messages they send, the wider the net they can cast and the higher their chances of finding a victim. Asking chatbots to draft phishing messages for them enables fraudsters to get scams off the ground with fewer resources, making it easier to scale.

What’s more, generative AI chatbots are very good at crafting well-written, believable messages. Fraudsters often give themselves away with spelling mistakes, grammar errors, or awkward language. With AI, they can easily generate polished, compelling messages free of the tell-tale mistakes that set off alarm bells in a reader’s head.

Starting in April 2023, AI21 Labs conducted what it described as the largest Turing test experiment to date. It used AI chatbots to test how often humans could correctly identify whether they were talking to a real person or an AI.

Across ten million conversations, participants guessed correctly around 68% of the time. In other words, about a third of the time people could not tell whether they were talking to a human or an AI.

These sorts of experiments suggest that we can’t rely on users alone to keep their accounts secure. Security professionals need to develop better systems that ensure only authorized users gain account access.

Key Takeaways

- While many potential generative AI threats are under discussion, the most relevant for fraud fighters are chatbots and deepfakes

- Chatbots enable fraudsters to expand the reach of phishing and other social engineering schemes, while deepfakes pose a threat to biometric authentication

- Multi-factor authentication, especially with passive signals like spoof-resistant device intelligence, can help secure users even in a post-generative AI era

Protecting accounts against AI-backed phishing attacks

One way to safeguard an account, even in the event that a fraudster successfully phishes the account credentials, is to have more than one authentication factor in place. That way, if one factor fails, the other one is there to sound the alarm.

However, getting users to opt in to multi-factor authentication (MFA) isn’t always easy. A study from Indiana University found that the key reasons people choose not to bother with MFA include a false sense of security and lack of motivation. As one of the researchers said, “You can enjoy driving the car, but you're not going to enjoy putting on your seat belt.”

The key is to reduce the friction of MFA as much as possible in order to encourage users to opt in. And this is where passive or background authentication factors can make a huge difference. After all, users are much more likely to adopt additional account security if it doesn’t require any extra effort from them.

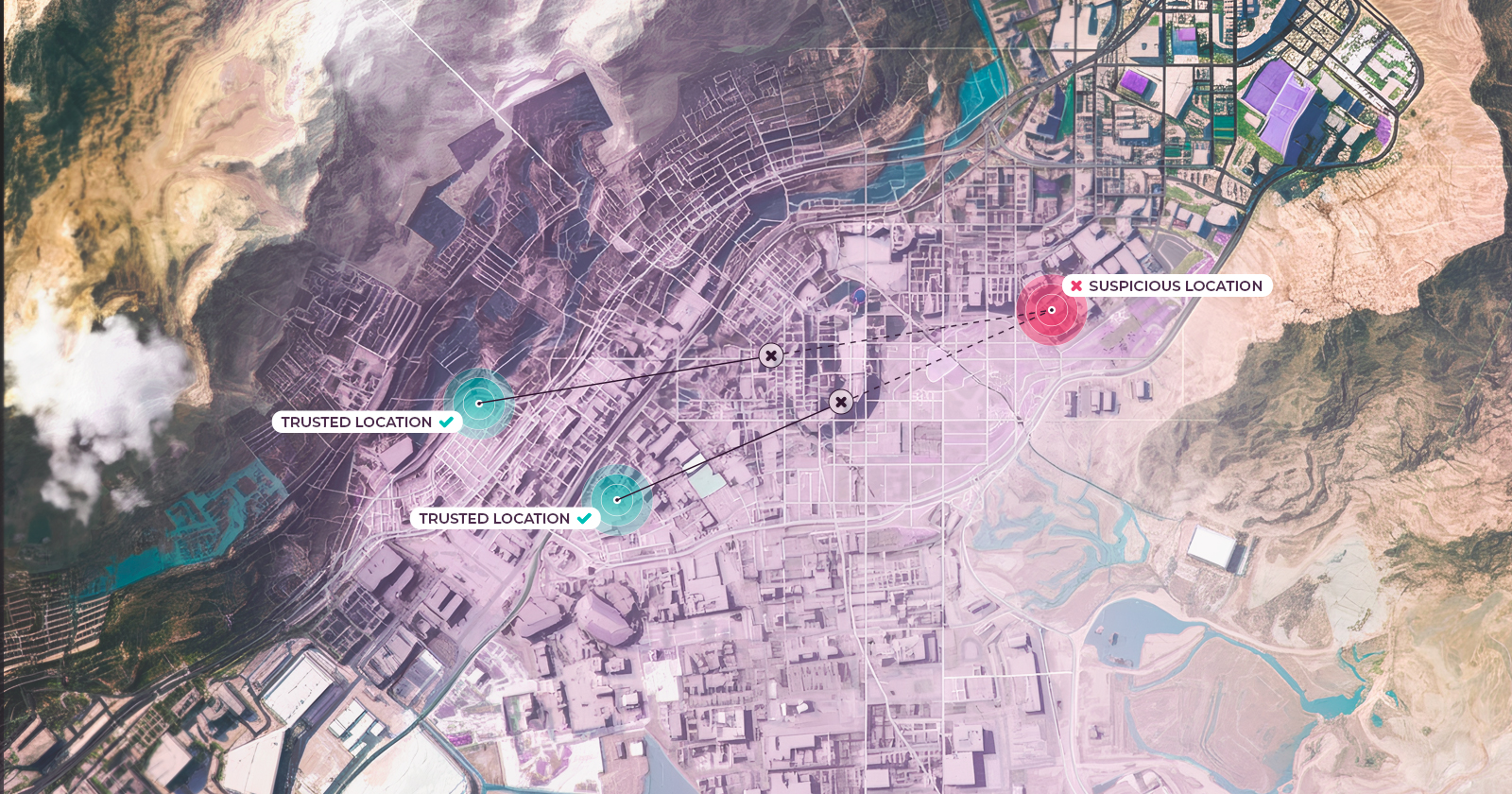

Location intelligence is one passive authentication factor that’s extremely accurate at assessing the legitimacy of login attempts. For example, Incognia’s Trusted Location solution can detect a fraudulent login attempt by identifying the device and determining the exact location where the login was initiated.

If the device is new and is accessing the account from a location that has never been associated with that user, then that login should be challenged. Incognia also builds a watchlist of devices and locations that were associated with fraud in the past, enabling platforms to block those users preemptively.
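The decision rule described above can be sketched in a few lines of Python. This is a minimal illustration, not Incognia's actual API: the `UserHistory` and `Watchlist` types, the `assess_login` function, and the identifier strings are all hypothetical names chosen for the example. It also assumes that a new device at a known location is allowed, which the text above does not specify.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    CHALLENGE = "challenge"
    BLOCK = "block"


@dataclass
class UserHistory:
    """Devices and locations previously seen for one account (hypothetical model)."""
    known_devices: set = field(default_factory=set)
    known_locations: set = field(default_factory=set)


@dataclass
class Watchlist:
    """Devices and locations previously associated with fraud (hypothetical model)."""
    devices: set = field(default_factory=set)
    locations: set = field(default_factory=set)


def assess_login(device_id: str, location_id: str,
                 history: UserHistory, watchlist: Watchlist) -> Decision:
    # Anything already tied to past fraud is blocked preemptively.
    if device_id in watchlist.devices or location_id in watchlist.locations:
        return Decision.BLOCK

    new_device = device_id not in history.known_devices
    new_location = location_id not in history.known_locations

    # A new device at a never-before-seen location should be challenged.
    if new_device and new_location:
        return Decision.CHALLENGE

    # Assumption: a familiar device or a familiar location is enough to allow.
    return Decision.ALLOW
```

For example, with a history of `{"dev-a"}` and `{"home"}`, a login from `"dev-b"` at `"cafe"` would be challenged, while the same device appearing on the watchlist would be blocked outright. A real system would likely weigh many more signals and return a risk score rather than three fixed outcomes.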

In addition to being passive, location intelligence is phishing-resistant. Even if a fraudster obtains account credentials or intercepts an SMS verification code, Incognia would still flag the login as suspicious based on device and location intelligence. Having an authentication factor beyond biometrics, which may be vulnerable to AI impersonation attacks, also helps secure accounts even if AI successfully fools a voice or facial recognition system.

AI chatbots enable fraudsters to cast a wider net, but even the widest net can’t catch a fish that sees it coming.

Deepfakes & what they mean for the future of fraud prevention

By now many of us have seen the infamous Tom Cruise deepfake videos, but the threat posed by deepfakes goes much deeper than hoax videos posted on social media.

As deepfakes grow more sophisticated, it’ll become much harder to secure biometric authentication processes like facial recognition against them. Adding generative AI into the mix means that deepfakes will also require less effort to produce than previously.

So how can we prevent deepfakes from overcoming account security?

I think it’s necessary to implement a layered security approach, including leveraging multi-factor authentication and device integrity checks.

Let’s look closer at one of those techniques: device integrity checks.

Device integrity checks can act as early interventions

Device integrity checks are an upstream intervention that can help measure the level of risk posed by a certain device. How does this work?

For example, to fool facial recognition technology, fraudsters need to override the device’s camera hardware. They use app tampering tools and emulators to do so, which means that devices with these types of programs enabled are inherently higher risk. A device integrity check can detect these programs and warn you that the device is risky and shouldn’t be trusted.

It’s a bit like catching someone outside of a house with a set of lockpicks—you don’t need to wait until they pick the lock to understand their intention.
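As a rough sketch of how such a check might feed a trust decision, the Python below flags a device when it reports an emulator or an app tampering tool. The `DeviceSignals` fields and both function names are hypothetical; in practice these signals would come from a device intelligence SDK or a platform attestation service, and this example does not show how they are collected.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceSignals:
    """Hypothetical signals reported for a device; field names are illustrative."""
    is_emulator: bool = False
    app_tampering_detected: bool = False


def integrity_risks(signals: DeviceSignals) -> list:
    """Return the reasons a device looks risky; an empty list means none were found."""
    reasons = []
    if signals.is_emulator:
        reasons.append("emulator")
    if signals.app_tampering_detected:
        reasons.append("app tampering tool")
    return reasons


def should_trust_for_biometrics(signals: DeviceSignals) -> bool:
    # Tools that can override the camera make a face capture untrustworthy,
    # so any detected risk is treated as disqualifying in this sketch.
    return not integrity_risks(signals)
```

Because the check runs before any biometric capture, a platform could decline the facial recognition step on a flagged device and fall back to another factor instead.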

Facing the brave new world

Artificial intelligence technology is advancing at breakneck speed, but that doesn’t mean it has to outpace fraud detection and prevention. Amid all of the hubbub surrounding generative AI, deepfake spoofing attacks and chatbot phishing are two threats that you can and should prepare for now. Both have concrete fraud use cases, but both also have countermeasures: phishing-resistant authentication factors and device integrity checks for risk assessment.

By implementing protections against AI-backed fraud attacks today, platforms can avoid account takeover incidents in the future.