Recent posts

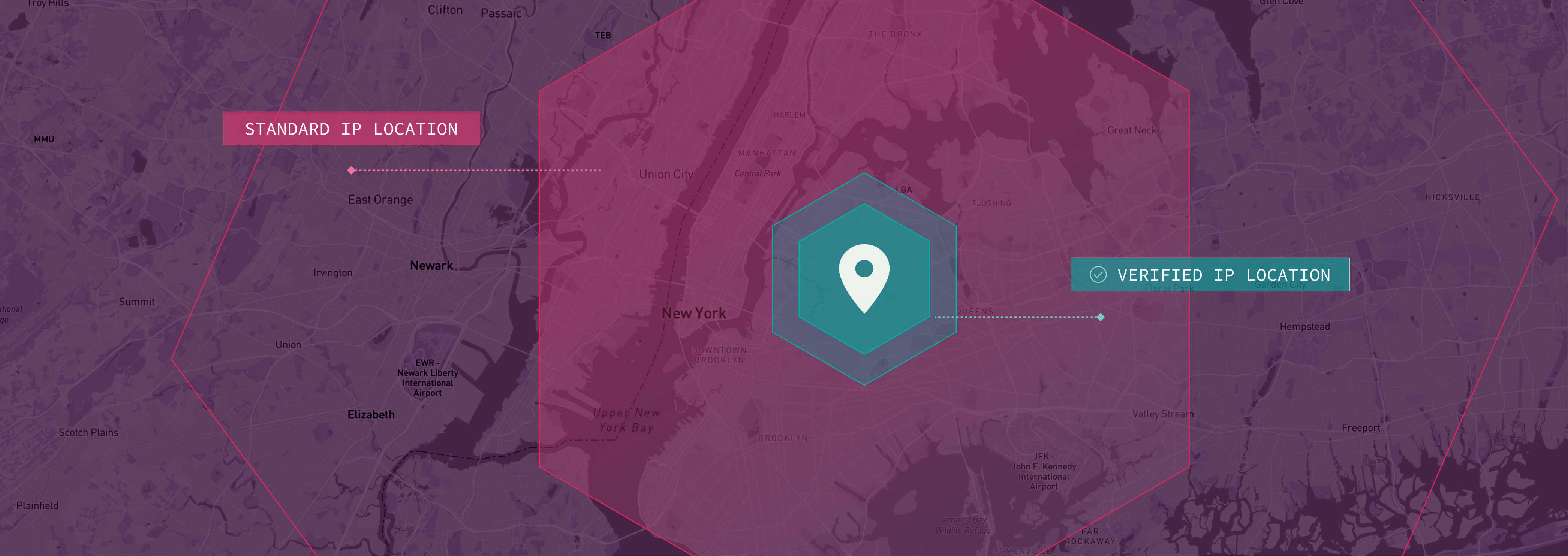

Verified IP Location: Know Where Your Web Traffic is Actually Coming From

Cross Device Authentication: Verify the Right Person in the Right Place

Enhance security with Incognia's Cross Device...

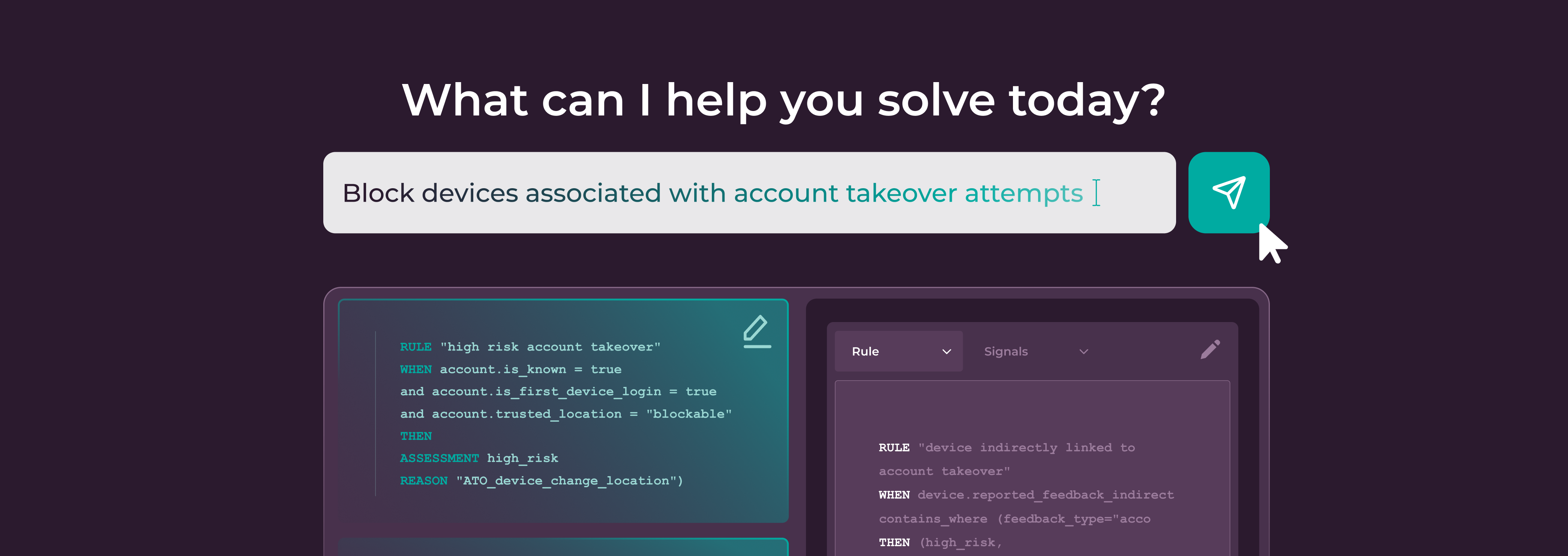

AI Rule Builder: The Next Innovation in How Fraud Rules Get Built

Discover how Incognia's AI Rule Builder revolutionizes...

Why Incognia’s AI-Powered Browser ID is the Next Standard for Web Identity

Discover how Incognia's AI-powered Browser ID redefines web...

Incognia’s Network Graph: Persistent Device ID for Faster Fraud Investigations

Persistent and tamper-resistant device IDs in Incognia's...

How Marketplaces Can Outsmart Seller Fraud in 2026

Discover how to combat sophisticated seller fraud in 2026...